Large language models like LLaMA, Mistral, and Falcon are trained on vast amounts of general text data. They perform impressively across a wide range of tasks, but they often fall short when applied to specialized domains – legal contracts, medical records, financial reports, or industry-specific terminology. The traditional solution was full fine-tuning: updating every parameter in the model using domain-specific data. The problem is that full fine-tuning requires enormous computational resources, making it inaccessible for most organizations.

Parameter-Efficient Fine-Tuning (PEFT) methods, specifically LoRA and QLoRA, change this equation entirely. They allow teams to adapt large models to specific business vocabularies at a fraction of the cost. For anyone enrolled in or considering a gen AI course in Pune, understanding these techniques is one of the highest-value areas of study in applied AI today.

Understanding the Problem With Full Fine-Tuning

A model like LLaMA-2 70B contains 70 billion parameters. Full fine-tuning updates every one of them, which means holding not only the weights in memory but also a gradient and optimizer state for each parameter during training. On consumer-grade hardware, this is simply not feasible. Even on enterprise GPU clusters, a single training run can cost thousands of dollars.
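To put a rough number on that memory cost, here is a back-of-the-envelope sketch. It assumes mixed-precision training with the Adam optimizer and the commonly quoted figure of about 16 bytes of training state per parameter; the byte counts and the function name are assumptions for illustration, and activation memory is ignored entirely.

```python
# Rough memory estimate for full fine-tuning with Adam in mixed precision.
# Assumed byte counts per parameter (a common rule of thumb, not a measurement):
BYTES_WEIGHTS_FP16 = 2      # 16-bit working copy of the weights
BYTES_GRADS_FP16 = 2        # 16-bit gradients
BYTES_ADAM_STATE_FP32 = 12  # 32-bit master weights + momentum + variance


def full_finetune_memory_gb(num_params: float) -> float:
    """Approximate training-state memory in GB (10^9 bytes), excluding activations."""
    bytes_per_param = BYTES_WEIGHTS_FP16 + BYTES_GRADS_FP16 + BYTES_ADAM_STATE_FP32
    return num_params * bytes_per_param / 1e9


if __name__ == "__main__":
    for name, n in [("7B", 7e9), ("70B", 70e9)]:
        print(f"{name}: ~{full_finetune_memory_gb(n):,.0f} GB")
```

Under these assumptions a 70B model needs on the order of a terabyte of accelerator memory before a single activation is stored, which is why full fine-tuning at this scale is spread across many GPUs.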

Beyond compute costs, full fine-tuning carries another risk: catastrophic forgetting. When a model is trained too aggressively on a narrow dataset, it can lose the general language understanding it developed during pre-training. The model becomes better at the specific task but worse at everything else.

PEFT methods address both of these problems by training only a small subset of parameters while keeping the original model weights frozen.

What Is LoRA and How Does It Work?

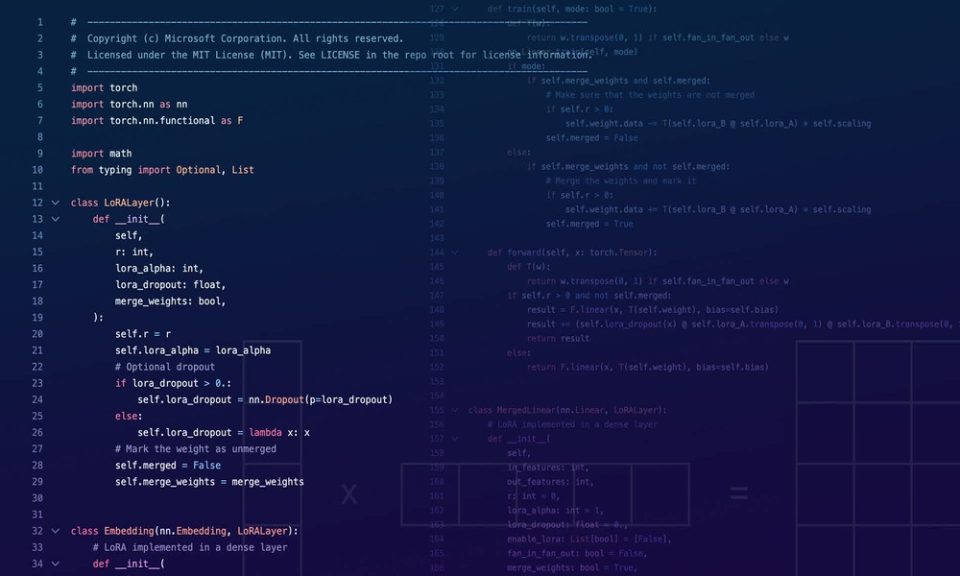

LoRA, which stands for Low-Rank Adaptation, was introduced by researchers at Microsoft in 2021. The core idea is mathematically elegant. Instead of updating the full weight matrix of a model layer, LoRA injects two much smaller matrices – called adapter matrices – into the layer. These matrices have a significantly lower rank than the original weight matrix, which means they contain far fewer parameters.
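Written out, the idea looks roughly like this. The symbols follow the convention used in the LoRA paper, but the specific example dimensions are chosen here for illustration. For a frozen weight matrix $W_0 \in \mathbb{R}^{d \times k}$, LoRA learns an update expressed as the product of two small matrices:

$$
h = W_0 x + \Delta W x = W_0 x + \frac{\alpha}{r} B A x, \qquad B \in \mathbb{R}^{d \times r},\; A \in \mathbb{R}^{r \times k},\; r \ll \min(d, k)
$$

Here $r$ is the rank and $\alpha$ is a scaling constant. The full matrix has $d \cdot k$ parameters, while the adapter pair has only $r(d + k)$. As an example, with $d = k = 4096$ and $r = 8$, that is $65{,}536$ trainable parameters against $16{,}777{,}216$ in the original matrix, or about 0.39%.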

During training, only these adapter matrices are updated. The original model weights remain frozen throughout. At inference time, the adapter matrices can be merged back into the original weights, so the deployed model carries no added latency.
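The merge step can be checked on a toy example. The sketch below uses plain Python lists rather than a deep learning framework, and the sizes, the `alpha` scaling value, and all function names are assumptions made for illustration. It compares the output of the separate adapter path with the output of a single merged weight matrix.

```python
import random


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def add(a, b):
    """Element-wise sum of two equally shaped matrices."""
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def scale(a, s):
    """Multiply every entry of a matrix by the scalar s."""
    return [[x * s for x in row] for row in a]


def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def lora_forward(w0, a, b, x, alpha, r):
    """Adapter path kept separate: h = W0 x + (alpha / r) * B (A x)."""
    base = matmul(w0, x)
    update = scale(matmul(b, matmul(a, x)), alpha / r)
    return add(base, update)


def merge(w0, a, b, alpha, r):
    """Fold the adapter into the base weight: W = W0 + (alpha / r) * B A."""
    return add(w0, scale(matmul(b, a), alpha / r))


if __name__ == "__main__":
    rng = random.Random(0)
    d, k, r, alpha = 6, 5, 2, 4   # toy sizes chosen for illustration
    w0 = rand_matrix(d, k, rng)    # frozen pre-trained weight (d x k)
    a = rand_matrix(r, k, rng)     # adapter A (r x k)
    b = rand_matrix(d, r, rng)     # adapter B (d x r), non-zero as if already trained
    x = rand_matrix(k, 1, rng)     # one input column vector

    separate = lora_forward(w0, a, b, x, alpha, r)
    merged = matmul(merge(w0, a, b, alpha, r), x)
    max_diff = max(abs(s[0] - m[0]) for s, m in zip(separate, merged))
    print(f"max difference between the two paths: {max_diff:.2e}")
```

The two paths agree up to floating-point rounding. After merging, the layer is an ordinary matrix multiplication again, which is why the merged model runs at the same speed as the original.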

In practice, LoRA can reduce the number of trainable parameters by over 99% compared to full fine-tuning, while achieving comparable performance on many domain-specific tasks. This makes it possible to fine-tune a 7B parameter model on a single consumer GPU with 24GB of VRAM. A 13B model, whose 16-bit weights alone occupy about 26GB, generally needs a quantized base model to fit on the same card.

The technique is directly applicable to real business use cases – adapting a general-purpose model to understand legal clauses, medical terminology, or retail product catalogs – which is precisely the kind of hands-on content covered in a quality gen AI course in Pune.

QLoRA: Pushing Efficiency Even Further

QLoRA builds on LoRA by introducing quantization into the process. Quantization reduces the precision of model weights – for example, representing values in 4-bit format instead of the standard 16-bit or 32-bit format. This dramatically reduces the memory footprint of the base model.
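To make the idea of reduced precision concrete, here is a deliberately simplified sketch. It uses plain absmax rounding to 4-bit integer codes for one small block of weights. This is a stand-in chosen for illustration, not the scheme QLoRA itself uses, and the function names and sample values are hypothetical.

```python
def quantize_4bit(values):
    """Map floats to integer codes in [-7, 7] using one absmax scale for the block."""
    absmax = max(abs(v) for v in values) or 1.0  # fall back to 1.0 for an all-zero block
    scale = absmax / 7
    codes = [round(v / scale) for v in values]
    return codes, scale


def dequantize_4bit(codes, scale):
    """Recover approximate floats from the integer codes."""
    return [c * scale for c in codes]


if __name__ == "__main__":
    block = [0.12, -0.70, 0.33, 0.05, -0.41, 0.68, -0.02, 0.27]
    codes, scale = quantize_4bit(block)
    restored = dequantize_4bit(codes, scale)
    worst = max(abs(a - b) for a, b in zip(block, restored))
    print("codes:", codes)
    print(f"largest round-trip error: {worst:.4f} (bounded by scale / 2 = {scale / 2:.4f})")
```

Each weight is stored as a small integer plus one shared scale per block, so storage drops to roughly 4 bits per weight at the cost of a bounded rounding error.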

In the QLoRA approach, the pre-trained model is loaded in 4-bit quantized format, which compresses its size significantly. LoRA adapters are then trained on top of this frozen quantized base in 16-bit precision, with the base weights dequantized on the fly whenever they are needed for computation. The key innovation is a data type called NF4 (4-bit NormalFloat), designed for the roughly normally distributed values found in neural network weights, which preserves model quality despite the aggressive quantization.

The result is remarkable: with QLoRA, it is possible to fine-tune a 65B parameter model on a single 48GB GPU – hardware that a single researcher or small team can realistically access. This opens up serious model customization to organizations that previously could not afford it.
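The arithmetic behind that figure is simple enough to sketch. The helper below counts only the memory needed to store the weights themselves. It ignores quantization constants, the adapters, optimizer state, and activations, so treat the numbers as a lower bound; the function name is an assumption for illustration.

```python
def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Memory for the model weights alone, in GB (10^9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


if __name__ == "__main__":
    for bits in (32, 16, 4):
        print(f"65B weights at {bits}-bit: ~{weight_memory_gb(65e9, bits):.1f} GB")
```

At 16-bit precision the weights of a 65B model already exceed a 48GB card several times over, while the 4-bit version leaves headroom for adapters and activations.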

Key Differences Between LoRA and QLoRA

| Feature | LoRA | QLoRA |
|---|---|---|
| Base model precision | 16-bit (FP16/BF16) | 4-bit quantized (NF4) |
| Memory requirement | Moderate | Very low |
| Training speed | Fast | Slightly slower |
| Output quality | High | Comparable to LoRA |
| Best suited for | Mid-size models | Very large models |

Practical Considerations for Implementation

Implementing LoRA or QLoRA in a production workflow involves a few key decisions.

Choosing the rank value. The rank r in LoRA controls the size of the adapter matrices. Lower ranks use fewer parameters and train faster but may not capture enough domain-specific nuance. Ranks between 8 and 64 are common starting points, with experimentation needed for each use case.

Selecting target modules. LoRA adapters are typically applied to the attention layers of a transformer model – specifically the query and value projection matrices. Some implementations also target the feed-forward layers for additional expressiveness.
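The effect of both choices on adapter size can be estimated before any training run. The sketch below assumes layer shapes typical of a LLaMA-7B-style model (hidden size 4096, feed-forward size 11008, 32 layers) and the projection names used in common open-source implementations. These shapes and names are assumptions, so substitute the values for the model actually being adapted.

```python
# Assumed layer shapes for a LLaMA-7B-style model: (input_dim, output_dim).
HIDDEN, INTERMEDIATE, NUM_LAYERS = 4096, 11008, 32
MODULE_SHAPES = {
    "q_proj": (HIDDEN, HIDDEN),
    "k_proj": (HIDDEN, HIDDEN),
    "v_proj": (HIDDEN, HIDDEN),
    "o_proj": (HIDDEN, HIDDEN),
    "gate_proj": (HIDDEN, INTERMEDIATE),
    "up_proj": (HIDDEN, INTERMEDIATE),
    "down_proj": (INTERMEDIATE, HIDDEN),
}


def lora_trainable_params(rank, target_modules):
    """Each adapted matrix adds rank * (input_dim + output_dim) trainable parameters."""
    per_layer = sum(rank * sum(MODULE_SHAPES[name]) for name in target_modules)
    return per_layer * NUM_LAYERS


if __name__ == "__main__":
    attention_only = ["q_proj", "v_proj"]
    all_linear = list(MODULE_SHAPES)
    for rank in (8, 16, 64):
        print(
            f"r={rank:>2}: q/v only = {lora_trainable_params(rank, attention_only):,}; "
            f"all linear layers = {lora_trainable_params(rank, all_linear):,}"
        )
```

With rank 8 on the query and value projections, the adapters come to about 4.2 million parameters, well under 0.1% of a model with roughly 6.7 billion weights. Targeting every linear layer at the same rank raises that to about 20 million, which is still a small fraction of the base model.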

Dataset quality over quantity. Because PEFT methods train only a small set of parameters, the quality and relevance of the fine-tuning dataset matter more than its size. A well-curated dataset of 5,000 domain-specific examples can outperform a poorly filtered dataset ten times larger.
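As a starting point for that curation, a first filtering pass might look like the sketch below. It only removes near-empty entries and exact duplicates after whitespace and case normalization. The function name, the `min_words` threshold, and the sample sentences are hypothetical, and a real pipeline would add domain-specific checks on top.

```python
def clean_examples(examples, min_words=5):
    """Drop near-empty and duplicate examples; the threshold is illustrative."""
    seen = set()
    kept = []
    for text in examples:
        collapsed = " ".join(text.split())   # collapse runs of whitespace
        key = collapsed.lower()              # case-insensitive duplicate key
        if len(key.split()) < min_words:
            continue  # too short to carry much domain signal
        if key in seen:
            continue  # duplicate of an example already kept
        seen.add(key)
        kept.append(collapsed)
    return kept


if __name__ == "__main__":
    raw = [
        "The lessee shall indemnify the lessor.",
        "the  lessee shall indemnify the lessor.",
        "N/A",
        "Payment is due within thirty days of invoice.",
    ]
    for example in clean_examples(raw):
        print(example)
```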

These implementation details are exactly the kind of practical knowledge that separates practitioners who can deploy AI systems from those who only understand them theoretically, and they are increasingly a focus area in advanced gen AI courses in Pune.

Conclusion

LoRA and QLoRA have fundamentally lowered the barrier to domain-specific model adaptation. They make it practical for businesses of all sizes to fine-tune powerful language models on their own data, using accessible hardware, without sacrificing meaningful performance. As more organizations look to embed AI into specialized workflows, PEFT methods will be central to how that customization happens efficiently and affordably.